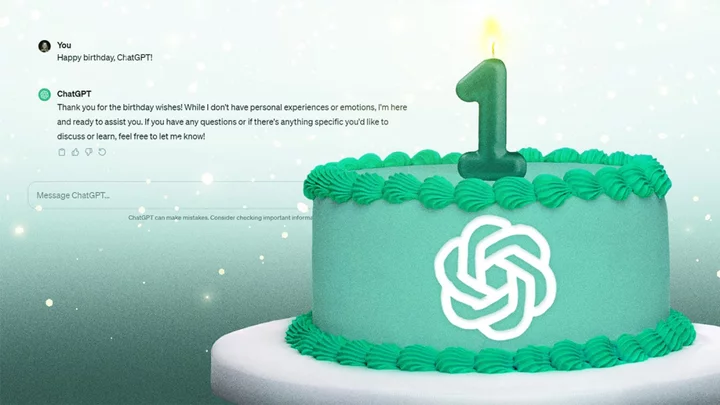

What happens when an unexpectedly powerful technology becomes an overnight sensation, quickly making its way into schools, businesses, and the dark corners of the web, with few laws or social norms to guide it? It's the story of ChatGPT, which has now been answering our questions (with a few hallucinations along the way) for a year.

OpenAI's chatbot has dominated tech headlines since its Nov. 30, 2022, debut. Two months in, it was the fastest-growing app of all time, lighting a fire under dozens of competitors and catching the eyes of investors, regulators, and copyright holders alike.

Its impact was on full display earlier this month after the shock dismissal of Sam Altman. Tech CEOs come and go, but OpenAI had become a big enough player for Microsoft CEO Satya Nadella to get involved and for OpenAI employees to threaten mass resignations unless Altman returned. He was quickly reinstated.

Meanwhile, ChatGPT entered the zeitgeist. "I asked ChatGPT to help me write this" became the joke of the year for public speeches, for better or worse.

Whether you've been following ChatGPT's every twist and turn or are wondering if it's time to try it out, here's what to know about the chatbot's explosive first year and what to expect in 2024.

1. ChatGPT Does Not 'Understand' Things

Much of 2023 was spent testing ChatGPT to see what it can do, and initially, its comprehensive answers surprised us all. How does it know that? As it turns out, it doesn't...really.

When you ask ChatGPT a question, it draws on patterns learned from troves of training data, plus previous conversations with other users, to figure out what words you most likely want to see. Large language models (LLMs) assign a score to each candidate word, based on the probability that it is the right one to come next, as the New York Times explains. ChatGPT's image-reading capability follows the same principle.
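To make the scoring idea concrete, here is a minimal sketch of how raw scores can be turned into probabilities for the next word. The candidate words and their scores are invented for illustration; a real model scores tens of thousands of word fragments (tokens) at once, and this is not OpenAI's actual code.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtracting the highest score keeps the exponentials numerically stable.
    exps = {word: math.exp(score - highest) for word, score in scores.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}

# Hypothetical scores for the word that follows "The cat sat on the ..."
candidate_scores = {"mat": 3.0, "sofa": 2.0, "moon": 0.1}

probabilities = softmax(candidate_scores)
best_word = max(probabilities, key=probabilities.get)

for word, probability in probabilities.items():
    print(f"{word}: {probability:.2f}")
print("Most likely next word:", best_word)
```

The highest-scoring word wins most of the time, but the lower-ranked words keep some probability, which is one reason the same question can produce different answers.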

This approach to language uses a framework created by the late Claude Shannon, a computer scientist dubbed the “father of information theory.” Shannon divided language into two parts: syntax (its structure) and semantics (its content, or meaning). ChatGPT operates purely on syntax, using math, plus user feedback, to produce answers without needing to know the semantics.
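A toy version of that statistical view of language is a word-pair counter: it learns which word tends to follow which, with no notion of what any word means. The sketch below is a simplified illustration with a made-up practice text, not a description of how ChatGPT itself is built.

```python
from collections import Counter, defaultdict

def build_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def most_likely_next(counts, word):
    """Return the word seen most often after `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Invented practice text; the model only ever sees word order, never meaning.
corpus = "the cat sat on the mat . the cat ate the fish ."
counts = build_bigram_counts(corpus)

print(most_likely_next(counts, "the"))    # "cat" follows "the" most often here
print(most_likely_next(counts, "zebra"))  # never seen, so there is no guess
```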

Sometimes it fails, leading to so-called "hallucinations," or nonsensical responses. That happens when the model “perceives patterns or objects that are nonexistent or imperceptible to human observers,” IBM says. Still, for a first-gen product, ChatGPT works incredibly well.

2. It's Best Used As a Helpful Starting Point

People are using ChatGPT in countless ways across industries, but there's one thing it's really good at: Helping you get started on a project.

That’s because it’s much easier to react to someone else’s ideas than to come up with your own. For example, let’s say you’re writing a tough email, such as telling your family you won’t be able to attend holiday celebrations. It’s easier to edit an email template that ChatGPT creates, with suggested phrases to soften the blow, than to start from scratch.

The same principle applies to any task, such as creating a packing list for a hiking trip. However, we’ve also learned that we can’t rely on ChatGPT to complete a task from start to finish. Its outputs require editing and fact-checking every time, and over-reliance on ChatGPT can get you into serious trouble. Software engineers working at Samsung, for example, got busted for feeding it proprietary code. And a lawyer cited fictional cases that ChatGPT made up in a legal brief.

For sensitive matters and niche fields, it's generally best to train a private chatbot on a fixed set of reliable data. Or just use it for casual brainstorming and do the majority of the work yourself.

3. With an Election Looming, Misinformation Abounds

Merriam-Webster's 2023 Word of the Year is "authentic," because a proliferation of AI-generated images, text, video, and audio means "the line between 'real' and 'fake' has become increasingly blurred." While ChatGPT does not generate video or audio, it offers a free way to quickly create text that can be posted online, sent in a text, or used as a script for a propaganda video.

Everyone from bad actors to everyday citizens to respected corporations dabbled in AI content creation this year. Meta released eerily convincing AI-generated characters based on Kendall Jenner, Snoop Dogg, and Tom Brady. But problematic faux images were everywhere, from scenes of Donald Trump being "arrested" to videos of a car "burning."

With the 2024 presidential election coming up in the US, voters should take extra steps to ensure they are consuming accurate information, especially given the misinformation campaign Russia waged during the 2016 election, before AI tools were so widely available. Slovakia already had issues with AI campaigns influencing its elections when an audio deepfake of a candidate circulated on Facebook, the Coalition for Women in Journalism reports.

The best way to tell if something is real? Always consult primary sources, or, better yet, get off the computer and see it with your own eyes.

4. Regulatory Efforts Are Piecemeal, But Evolving

In Washington, ChatGPT has added another layer to issues that lawmakers were already struggling to tackle, from disinformation and the future of work in the age of automation to how much of our personal data corporations should be able to access.

A court has already ruled that AI-created art cannot be copyrighted; a human needs to be involved. But chatbots like ChatGPT are built on publicly available information scraped from the internet, among other sources, which has led to a flood of lawsuits accusing tech companies of using copyrighted material to build their chatbots without compensating artists. In Hollywood, a major issue in this year's strikes was the use of AI to write scripts or generate actors onscreen.

OpenAI now lets artists exclude their work from the model's training. Online publishers can also add a line of code to block OpenAI's web crawlers. But when it comes to regulation, lawmakers are divided on how to do it. Do we target the AI tools themselves, their creators, or the people who use them?
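For publishers, the "line of code" is typically a rule in the site's robots.txt file. The sketch below assumes the crawler identifies itself as GPTBot, the name OpenAI documents for its web crawler, and uses Python's built-in robots.txt parser to check what the rule does. The example.com address and the "SomeOtherBot" name are placeholders, and the file only works if the crawler chooses to honor it.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt contents that ask OpenAI's crawler to stay off the whole site.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /
"""

def can_fetch(robots_txt, user_agent, url):
    """Check whether the given crawler is allowed to fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# Placeholder URL and a made-up second crawler name, for comparison.
gptbot_allowed = can_fetch(ROBOTS_TXT, "GPTBot", "https://example.com/article")
other_allowed = can_fetch(ROBOTS_TXT, "SomeOtherBot", "https://example.com/article")

print("GPTBot allowed:", gptbot_allowed)
print("Other crawler allowed:", other_allowed)
```

Because the rule names only one crawler, search engines and other bots are unaffected unless the publisher adds rules for them too.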

The EU gave it a shot this year with the AI Act. It includes a long list of provisions, such as a formal process for government bodies to review "high-risk" AI systems and confirm they have safety measures in place. Another clause requires anyone who uses AI to create a compelling deepfake of another person to disclose that it is AI-generated.

In the US, the Biden administration released an Executive Order on AI in October. It requires government agencies to work on guidelines for controlling deepfakes, frameworks for how to exclude private information from AI training data, protocols to reduce AI-generated robocalls and texts, and more. Thus far, however, AI legislation has seen little traction in Congress.

5. In 2024, AI Will Be Hard to Avoid, Especially at Work

Despite the complexity, AI is clearly here to stay for the foreseeable future, especially at work and school. Harvard University built a chatbot for student instruction. Zoom and Webex are using it to transcribe meetings, and doctors are doing the same for medical notes. Google is finding more ways to inject AI into Gmail. The list goes on.

Custom AIs, or chatbots built for a specific purpose, are also on the rise. OpenAI launched its new "GPTs" feature this fall, which allows anyone with a ChatGPT Plus account ($20 per month) to create one. Microsoft is also adding GPTs to its Copilot products, and tech-savvy individuals are building off open-source models, such as Meta's Llama 2.

It remains to be seen how much of this sticks, especially in our personal lives (beyond auto-completed texts and emails, that is). But it seems like the industry thinks that having more customized, smaller AIs could help shift the perception of AI as a potentially apocalyptic tool into something useful (and lucrative).